ChatGPT dropped in November 2022. Schools met. Schools wrote policies. Schools debated bans. Students did not wait.

While leadership hoped teachers would get comfortable with the technology, kids quietly started using AI on their own. Just as they did with social media, they used it without discretion. It became part of their lives before it became part of our lesson plans.

Now, AI is no longer a destination website you have to navigate to intentionally. It’s baked into phones, Google Docs, social media feeds, and search bars. We can no longer wait for professional development while technology becomes unavoidable.

Bans Don’t Build Judgment

In most schools, teachers mainly noticed the cheating. So the response was predictable: ban it, block it, police it.

But a ban is not AI literacy. AI literacy belongs in a lesson plan.

If students are already using AI outside school, a ban just guarantees they’ll use it without guidance. And bans create a hidden equity gap. Students with tech-savvy parents and paid subscriptions will learn to use AI as a powerful tutor at home, while students without those resources fall further behind.

We need to stop asking “How do we keep them from using it?” and start asking: “How do we teach them to question it, verify it, and think with it instead of letting it think for them?”

“You’re not just giving kids a tool. You’re getting a window into their minds.”

What This Actually Looks Like in a Classroom

AI literacy is not handing every kid a ChatGPT account and calling it innovation.

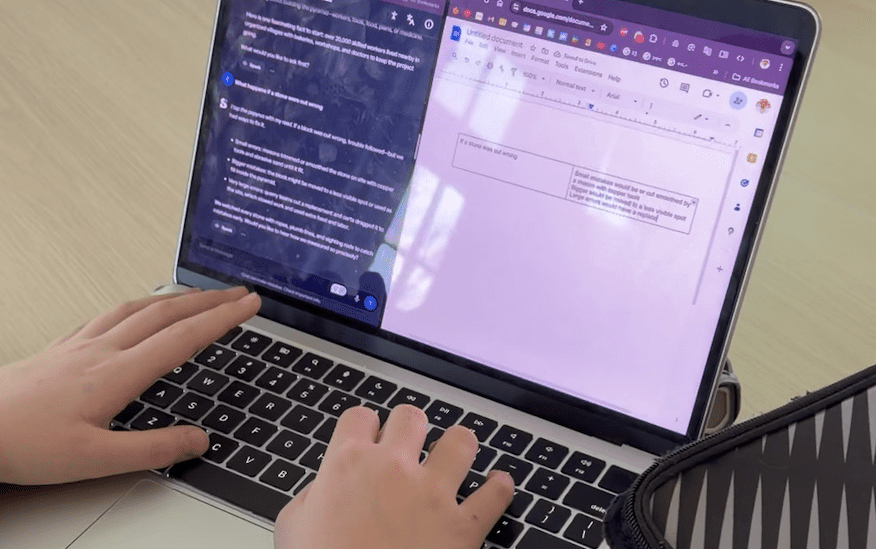

The better move is teaching AI literacy through guided moments within real learning, with guardrails. Platforms like SchoolAI, Brisk, or Flint let teachers build guardrailed experiences where students get instant feedback, practice evaluating outputs, and strengthen their reasoning, all without replacing teaching.

Here’s a concrete example. Your class is studying the pyramids of Giza. You build a focused chatbot that only discusses that unit’s content. Students can explore in any direction, but within a safe boundary. And while they’re asking questions, you’re seeing their questions: what they’re curious about, what they misunderstand, who’s going deeper, and who’s stuck.

That data helps fuel your next instructional moves.

A Simple Sequence Any Grade Can Run

You don’t need a new course. You need repeatable habits woven into real assignments.

Start by having students evaluate an AI-generated response connected to current content. What looks accurate? What feels vague? Whose perspective might be missing? Then teach them to improve their prompts — not to get the answer, but to deepen thinking.

Build verification as a default: pick one claim, find a trusted source, confirm or contradict it. Then have students reflect on what they accepted, what they rejected, and why.

Replicate that in small bursts throughout the year. Tie it to real content. Make it normal. That’s how literacy is built — not with a one-time assembly or a policy PDF, but with routines.

I’ve been teaching since 1996. This moment isn’t about moving faster. It’s about moving intentionally.

If we do this well, we don’t just reduce cheating; we also improve learning. We increase thinking, gain better insight into students’ understanding, and build safer habits before bad ones take root.

If you want to go deeper on using AI safely and intentionally with kids, I’ll be giving a keynote on just this at Spring CUE powered by CALIE next month. Hope to see you there.

Holly Clark is a California educator who works with schools around the globe on their intentional use of AI. You can follow her on Instagram and LinkedIn at @HollyClarkEdu, listen to The Digital Learning Podcast, or read The AI Infused Classroom.